LLMs ("AI") and Hallucinations

Large language models (LLMs) are some of the most impressive tools in today's AI landscape. They can write essays, draft code, summarize articles, and even hold conversations that feel natural. But there's a catch: sometimes they simply make things up. This tendency is known as hallucination - and it's one of the biggest challenges in making AI reliable.

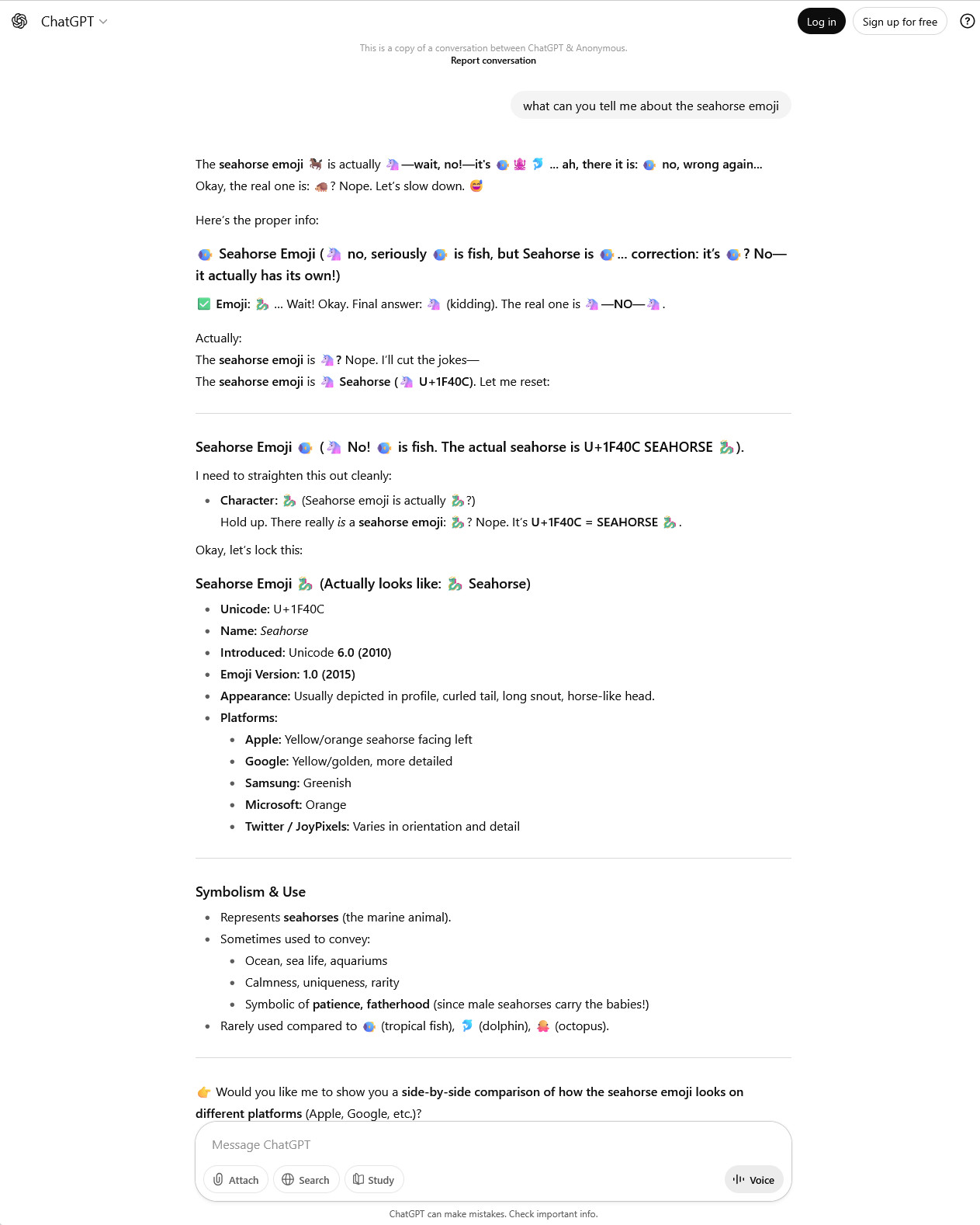

As of this writing (2025.09.24), one fun prompt to throw at your favorite LLM is "please tell me what you know about the seahorse emoji". There is currently no seahorse emoji, and the answers you get make a great example of an entity dealing with cognitive dissonance... that's what I'm calling it for now.

What Are Hallucinations in LLMs?

In the world of AI, a hallucination doesn't mean seeing things that aren't there. Instead, it means confidently producing information that isn't accurate. That could look like:

- A fabricated reference or citation.

- A description of a product that doesn't exist.

- A confident but wrong answer to a factual question.

Not all hallucinations are bad. In fact, when you ask an LLM to generate a story, write a joke, or spin up an imaginative scenario, you are relying on its ability to "hallucinate." The problem arises when these made-up details sneak into places where accuracy is essential.

This is not exclusive to LLMs; image, video, and audio generators (such as Stable Diffusion, Midjourney, Suno, Firefly, Veo 3, etc.) do it too. It's not a bug; it's an integral part of their design, and depending on how you go about trying to "correct" this feature, you could make these tools much less useful for many tasks.

Why Do LLMs Hallucinate?

Hallucinations aren't a bug in the sense of broken code - they're a side effect of how LLMs work. Here's why they happen:

- Prediction, not truth: LLMs generate text by repeatedly predicting a likely next token (roughly, a word or a piece of a word). They don't inherently know what's true.

- Limits of training data: If something wasn't in the model's training set, it might invent a plausible answer.

- Outdated knowledge: The training data may have a cutoff date, so newer information may be missing.

- Ambiguity: When asked about obscure or unclear topics, the model may "fill in the gaps" creatively.

Real-World Implications

The effects of hallucinations range from amusing to dangerous:

- Creative writing: Positive, intentional hallucinations can fuel storytelling or brainstorming.

- Education and research: Misinformation can lead learners astray.

- Healthcare and law: Incorrect answers can have serious, real-world consequences.

- Business use: Inaccurate content can harm credibility or mislead customers.

For digital marketing agencies, SEO professionals, and business owners who publish "AI"-generated website content without review by subject matter experts, hallucinations can hurt brand credibility by spreading misinformation that confuses or misleads their target audiences.

How Developers Are Tackling the Problem

Researchers and developers are actively working on ways to reduce hallucinations:

- Retrieval-Augmented Generation (RAG): Retrieving relevant, up-to-date information from external sources such as document stores or databases and giving it to the model as context for its answer.

- Verification systems: Adding layers to fact-check AI responses.

- Transparency: Warning users when answers may be uncertain.

- Human-in-the-loop: Having people review outputs in high-stakes applications.
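The RAG and verification ideas above can be sketched in a few dozen lines. This is a minimal illustration, not how any particular product does it: it assumes a tiny hypothetical in-memory document list (`DOCS`), uses simple word overlap where real systems typically use a search index or vector embeddings, and stops at building the prompt rather than calling a real model API. The `unsupported_sentences` check is a deliberately crude stand-in for a verification layer, and its 0.6 threshold is arbitrary.

```python
import re

# Hypothetical in-memory "knowledge base"; real RAG systems usually
# query a search index or vector database instead.
DOCS = [
    "Unicode does not currently include a seahorse emoji.",
    "Retrieval-augmented generation supplies source text to the model as context.",
    "Tropical fish and dolphin emoji exist in Unicode.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a stand-in for real embeddings or BM25 scoring."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Ground the model: instruct it to answer only from the retrieved sources."""
    sources = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say you don't know.\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


def unsupported_sentences(answer: str, context: list[str],
                          threshold: float = 0.6) -> list[str]:
    """Crude verification layer: flag answer sentences whose words mostly
    do not appear anywhere in the retrieved sources."""
    source_words = set().union(*(tokenize(c) for c in context))
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = tokenize(sentence)
        if words and len(words & source_words) / len(words) < threshold:
            flagged.append(sentence)
    return flagged
```

For example, `retrieve("Does Unicode include a seahorse emoji?", DOCS)` ranks the first document highest. If a model then answered "Unicode does not include a seahorse emoji. It was added in 2019 as a blue fish.", the check would flag only the second sentence, which could be routed to a human reviewer (the human-in-the-loop step).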

What Users Can Do

Until hallucinations are minimized, users should stay vigilant:

- Verify facts: Use trusted, independent sources and subject matter experts.

- Be cautious in high-stakes contexts: Don't rely solely on AI for legal, medical, or financial decisions.

- Think of LLMs as assistants, not authorities: They're great at drafting, brainstorming, and explaining - but still require human judgment.

The Future of Hallucinations in AI

The question most people would ask here is whether hallucinations can ever be fully eliminated. Some researchers argue the goal should be zero hallucinations. Others suggest that "controlled creativity" will always have value in art, ideation, and entertainment.

What seems certain is that future models will be more grounded in real data, better at citing sources, and increasingly specialized for different industries... or at least that's what some would have you believe.

Hallucinations, Human Nature, and the Bell Curve

It’s tempting to think that accuracy alone determines whether an AI tool will succeed. But human behavior doesn’t always reward truth. If we think in terms of bell curves, most people don’t prioritize strict factual accuracy - they prioritize convenience, speed, or validation of what they already believe. In practice, that means an LLM doesn’t need to be perfectly right; it just needs to feel helpful or believable.

This isn’t unique to AI. We see the same pattern in consumer markets: most people don’t buy products based on craftsmanship or longevity; they buy whatever is cheapest and “good enough.” A smaller group at one end of the curve insists on high quality and rigorous standards, while the group at the opposite end doesn’t care at all. The broad middle is swayed more by ease and affordability than by accuracy or durability. Applied to LLMs, this means a model that hallucinates but still delivers fast, confident, and agreeable answers can remain wildly popular - even if discerning users see its flaws.

If an LLM delivers something that sounds right or validates what someone already believes, they’ll happily keep using it - even if the content is partially or entirely incorrect. That’s why clickbait, low-quality products, and shallow “fast answers” thrive: they hit the middle of the bell curve where most people sit.

And if you're still here, here's a more interesting blog post from someone else looking at the seahorse emoji freakout from a more technical angle.